Prompt playground

The prompt playground is a tool for exploring, comparing, and evaluating prompts. The playground is deeply integrated with Braintrust, so you can easily try out prompts with data from your datasets.

The playground supports a wide range of models, including the latest models from OpenAI, Anthropic, Mistral, Google, Meta, and more, deployed on first- and third-party infrastructure. You can also configure it to talk to your own model endpoints and custom models, as long as they speak the OpenAI, Anthropic, or Google protocol.

We're constantly working on improving the playground and adding new features. If you have any feedback or feature requests, please reach out to us.

Creating a prompt session

The prompt playground organizes your work into sessions. A session is a saved and collaborative workspace that includes one or more prompts and is linked to a dataset.

Sharing prompt sessions

Prompt sessions are designed for collaboration and automatically synchronize in real-time.

To share a prompt session, simply copy the URL and send it to your collaborators. Your collaborators must be members of your organization to see the session. You can invite users from the settings page.

Writing prompts

Each prompt includes a model (e.g. GPT-4 or Claude-2), a prompt string or messages (depending on the model), and an optional set of parameters (e.g. temperature) to control the model's behavior. When you click "Run" (or use the keyboard shortcut Cmd/Ctrl+Enter), each prompt runs in parallel and the results stream into the grid below.
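To make the three parts of a prompt concrete, here is a minimal sketch of how they might be represented in code. The `PromptConfig` class and its `to_request_body` method are hypothetical names used only for illustration; they are not part of Braintrust. The output shape assumes an OpenAI-style chat request, where parameters sit next to the model and messages.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any


@dataclass
class PromptConfig:
    """Hypothetical container for the three parts of a playground prompt."""

    model: str
    messages: list[dict[str, str]]
    params: dict[str, Any] = field(default_factory=dict)

    def to_request_body(self) -> dict[str, Any]:
        # Assumption: an OpenAI-style chat payload, with parameters
        # such as temperature placed alongside model and messages.
        return {"model": self.model, "messages": self.messages, **self.params}


prompt = PromptConfig(
    model="gpt-4",
    messages=[
        {"role": "system", "content": "You are a concise assistant."},
        {"role": "user", "content": "Explain what a dataset record is."},
    ],
    params={"temperature": 0.2},
)
body = prompt.to_request_body()
```

In the playground you set these same three pieces through the UI rather than in code.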

Without a dataset

By default, a prompt session is not linked to a dataset and is self-contained. This is similar to the behavior of other playgrounds (e.g. OpenAI's). This mode is a useful way to explore and compare self-contained prompts.

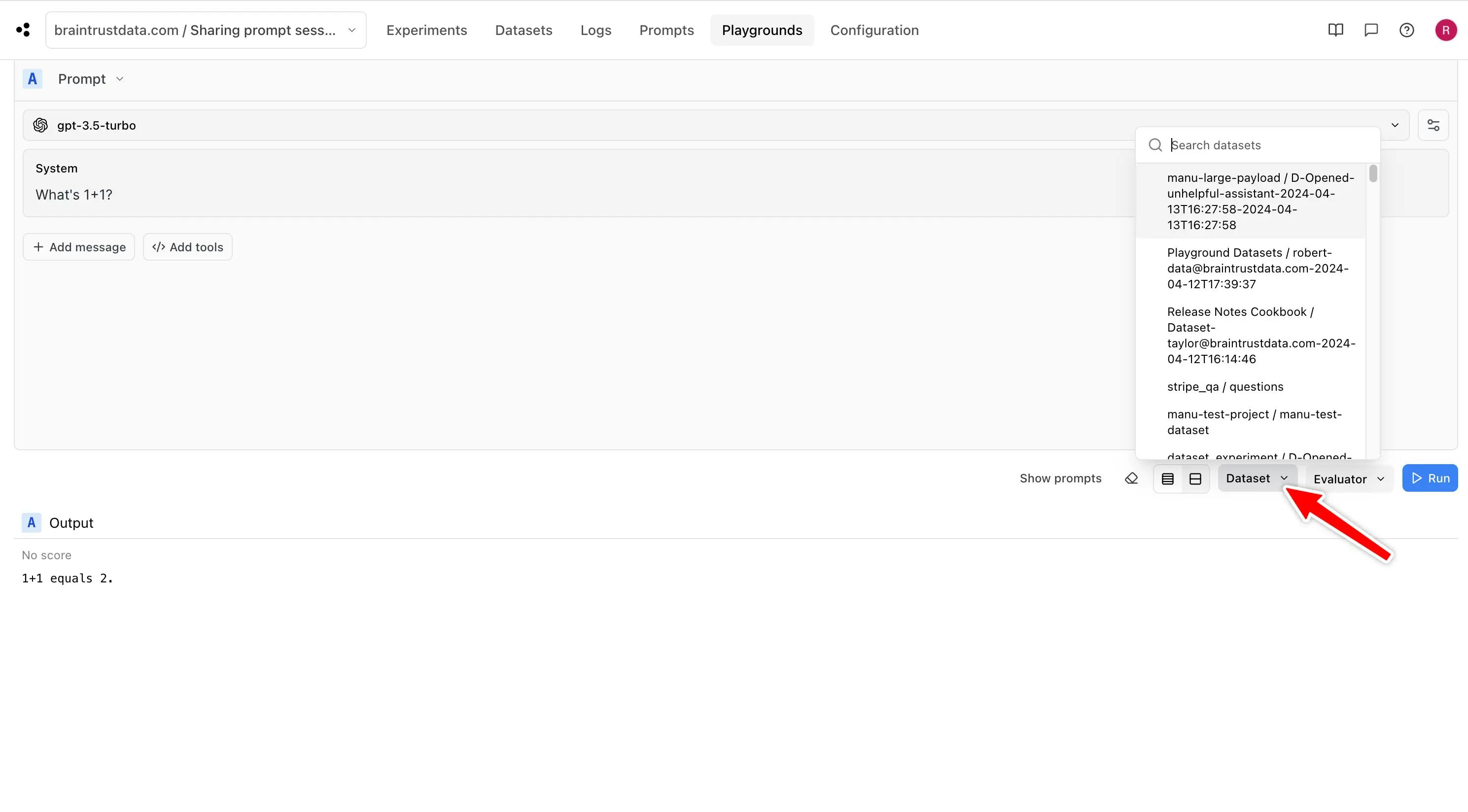

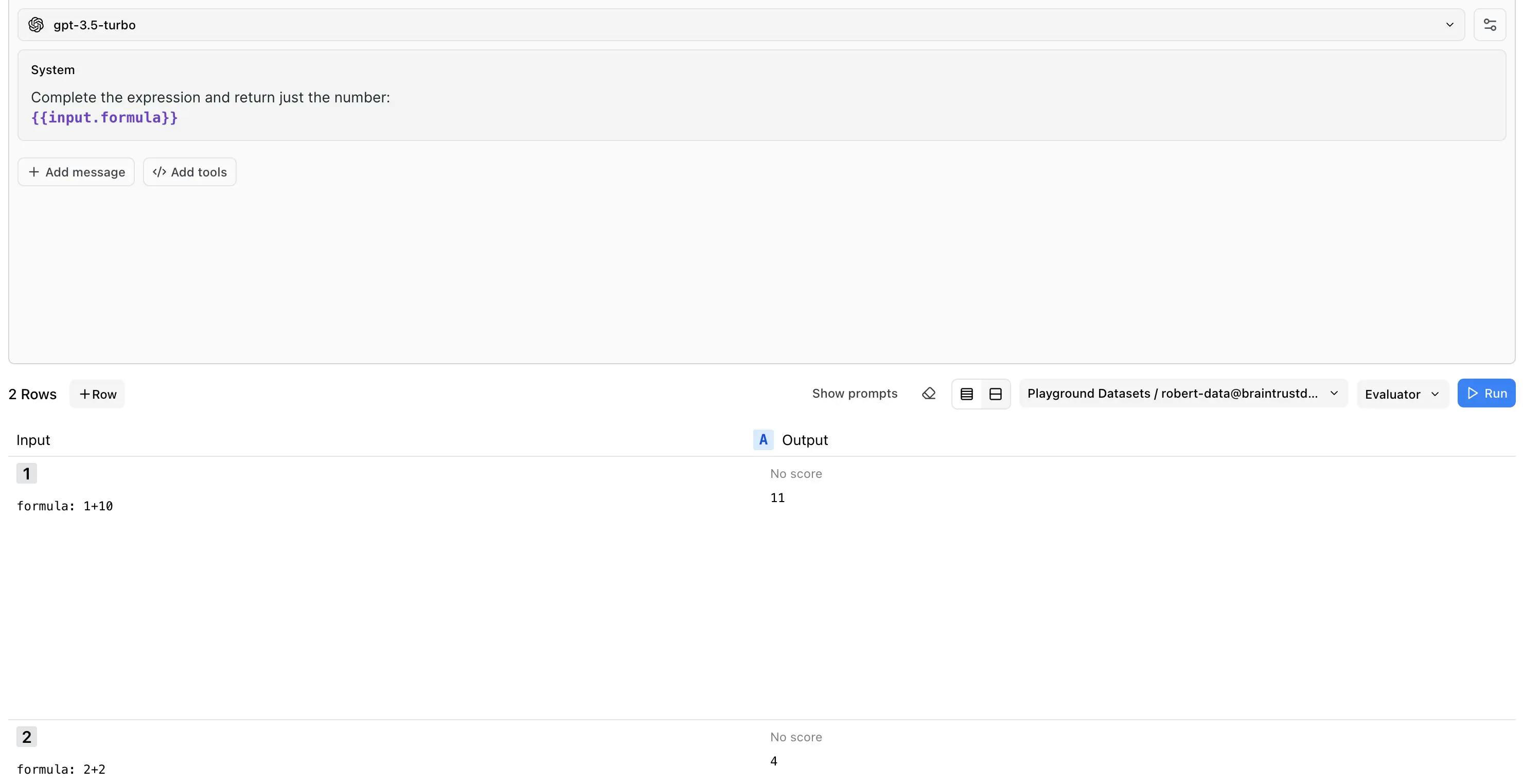

With a dataset

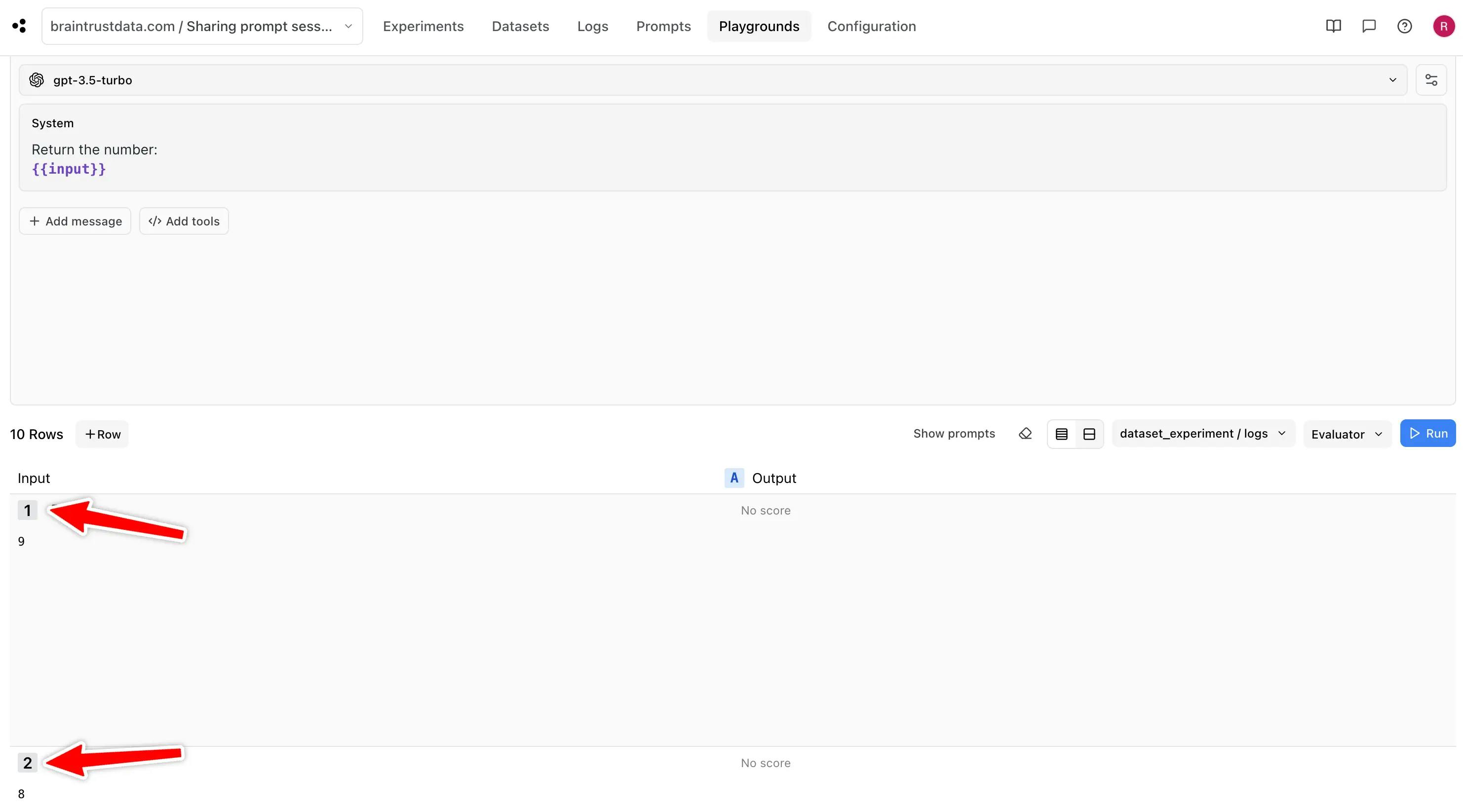

The real power of Braintrust comes from linking a prompt session to a dataset. You can link to an existing dataset or create a new one from the dataset dropdown:

Once you link a dataset, you will see a new row in the grid for each record in the dataset. You can reference the data from each record in your prompt using the input, expected, and metadata variables. The playground uses mustache syntax for templating:

Each value can be arbitrarily complex JSON, e.g.

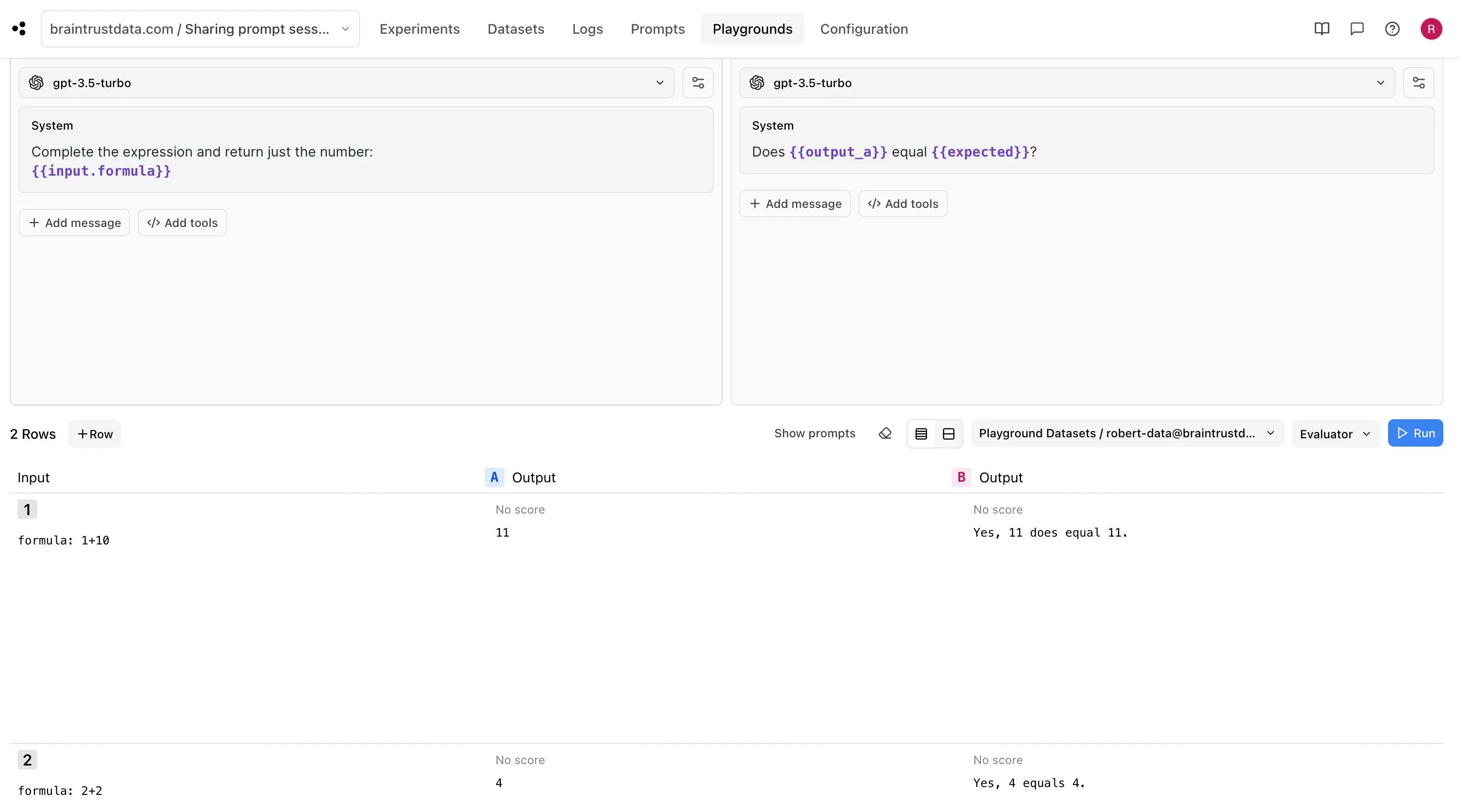
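The variable substitution described above can be sketched in a few lines of Python. This is an illustrative approximation, not the playground's implementation: the `lookup` and `render` helpers and the sample record are hypothetical, only plain `{{name}}` and dotted `{{a.b}}` tags are handled, and rendering non-string values as JSON text is an assumption. Real mustache also supports sections and HTML escaping, which this sketch omits.

```python
import json
import re
from typing import Any

# Matches simple variable tags such as {{input}} or {{input.question}}.
_TAG = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def lookup(context: dict, path: str) -> Any:
    """Resolve a dotted path such as 'input.question' against nested dicts."""
    value: Any = context
    for key in path.split("."):
        if isinstance(value, dict) and key in value:
            value = value[key]
        else:
            return ""  # Mustache renders missing variables as empty strings.
    return value


def render(template: str, record: dict) -> str:
    """Replace each tag in the template with the matching record value."""

    def substitute(match: re.Match) -> str:
        value = lookup(record, match.group(1))
        # Assumption: non-string values are shown as JSON text.
        return value if isinstance(value, str) else json.dumps(value)

    return _TAG.sub(substitute, template)


# A hypothetical dataset record whose input is nested JSON.
record = {
    "input": {"question": "What is 2 + 2?", "language": "English"},
    "expected": "4",
    "metadata": {"difficulty": "easy"},
}
template = "Answer in {{input.language}}: {{input.question}} (difficulty: {{metadata.difficulty}})"
rendered = render(template, record)
```

Here `rendered` is the prompt text the model would receive for this one record; with a linked dataset, the same template is filled in once per row.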

Referencing outputs

Each prompt can reference the output of other prompts in the session (e.g. output_a). This is useful for validating outputs or chaining prompts together. For example, we can add a grading prompt to the previous example to verify that the output matches an expected value:
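As a rough sketch of this chaining pattern, the code below runs a first prompt, then feeds its result into a grading prompt as output_a. Everything here is hypothetical: the `fill` and `run_chain` helpers, the prompt wording, and the stub models that stand in for real model calls so the example runs offline. It illustrates the data flow only and is not how the playground is implemented.

```python
from typing import Callable, Dict


def fill(template: str, variables: Dict[str, str]) -> str:
    """Substitute flat {{name}} placeholders; a stand-in for mustache rendering."""
    for name, value in variables.items():
        template = template.replace("{{" + name + "}}", value)
    return template


def run_chain(
    record: Dict[str, str],
    answer_model: Callable[[str], str],
    grader_model: Callable[[str], str],
) -> Dict[str, str]:
    # The first prompt sees only the dataset record.
    prompt_a = fill("Answer briefly: {{input}}", record)
    output_a = answer_model(prompt_a)

    # The grading prompt sees the record plus the first prompt's output.
    grading_prompt = fill(
        "Does the answer '{{output_a}}' match the expected value '{{expected}}'? "
        "Reply YES or NO.",
        {**record, "output_a": output_a},
    )
    return {"output_a": output_a, "grade": grader_model(grading_prompt)}


# Stub models (assumptions) that replace real model calls.
def stub_answer(prompt: str) -> str:
    return "4"


def stub_grader(prompt: str) -> str:
    return "YES" if "'4' match the expected value '4'" in prompt else "NO"


result = run_chain(
    {"input": "What is 2 + 2?", "expected": "4"}, stub_answer, stub_grader
)
```

Because the grading prompt depends on output_a, it can only run after the first prompt has produced a result for that row.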

Custom models

To configure custom models, see the Custom models section of the proxy docs. Endpoint configurations, like custom models, are automatically picked up by the playground.